Following the success of previous editions, Criteo AI Lab is co-organizing the joint AdKDD & TargetAd workshop in conjunction with KDD 2017. The workshop aims to bring together researchers and industrial practitioners in computational advertising.

This year the workshop features a strong program with four invited speakers: Thorsten Joachims (Cornell University), Susan Athey (Stanford), Alex Smola (Amazon) and Randall Lewis (Netflix). To highlight the most impactful work, Criteo AI Lab is also sponsoring the Best Paper Award.

Two contributions from Criteo AI Lab round out this rich agenda:

- Attribution Modeling Increases Efficiency of Bidding in Display Advertising from Eustache Diemert, Julien Meynet (Criteo AI Lab), Pierre Galland (Criteo) and Damien Lefortier (Facebook)

- Cost-sensitive Learning for Utility Optimization in Online Advertising Auctions from Flavian Vasile (Criteo AI Lab), Damien Lefortier (Facebook), Olivier Chapelle (Google)

Attribution Modeling Increases Efficiency of Bidding in Display Advertising

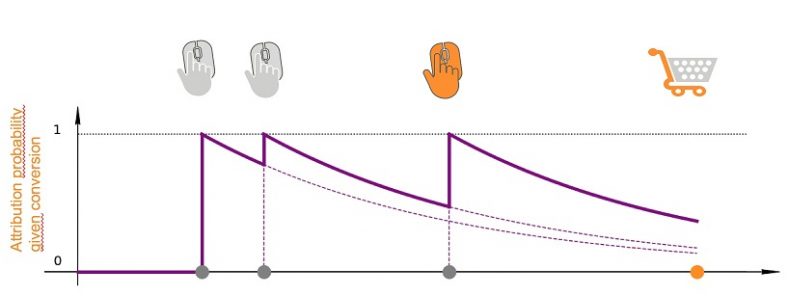

In performance advertising, attribution is the process of determining how to assign credit to touchpoints in conversion paths. Evaluating the true causal impact of each touchpoint on the conversion is a challenging task, and various approaches have been proposed in the literature to model attribution. However, the industry standard is still to give full credit to the last click before the conversion.

Separately, numerous studies have focused on accurately estimating click or conversion probabilities at scale, and open datasets have been published for this task. But most of these studies do not explicitly model the attribution process, and we claim that the resulting baseline bidding strategy is inefficient.

In this paper we bridge the gap between the two research directions: how can we incorporate a proper attribution model into a more efficient bidding strategy? The main difference between the proposed bidder and the baseline bidder is the bidding behavior right after a click. While the baseline bidder treats the click as a strong user engagement signal, and thus increases the bid values after a click so as not to lose the last click, our proposed bidder drastically decreases the bid right after a click. Indeed, the click already brings a high probability of receiving the attribution should a conversion occur. We claim a better strategy is thus to reduce post-click user exposure and reinvest the budget on users for whom the impact of display advertising is higher.
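To make the contrast between the two bidders concrete, here is a minimal sketch. It assumes the post-click discount takes the form of an exponential time decay, which is an illustrative choice and not something stated in this post; the function names, the `decay_rate` parameter and its default value are all hypothetical.

```python
import math


def baseline_bid(value, p_conversion):
    """Expected-value bid that ignores attribution entirely."""
    return value * p_conversion


def attribution_aware_bid(value, p_conversion,
                          seconds_since_last_click=None,
                          decay_rate=1e-4):
    """Hypothetical attribution-aware bid.

    Assumption: right after a click the bid is discounted by
    1 - exp(-decay_rate * elapsed), so it is near zero just after
    the click and recovers toward the baseline bid as time passes.
    """
    bid = baseline_bid(value, p_conversion)
    if seconds_since_last_click is None:
        # No previous click: nothing to discount.
        return bid
    discount = 1.0 - math.exp(-decay_rate * seconds_since_last_click)
    return bid * discount


# One minute after a click the bid is heavily reduced;
# one day later it is almost back to the baseline level.
just_clicked = attribution_aware_bid(10.0, 0.02, seconds_since_last_click=60)
day_later = attribution_aware_bid(10.0, 0.02, seconds_since_last_click=86400)
```

The budget freed by the low post-click bids is what the proposed strategy would reinvest on other users.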

In the paper, we provide details on the attribution model we use, and how it is incorporated into the bidder. We also propose to extend the utility metric used for offline evaluation, in order to make it applicable to our attribution-aware bidder. We report offline results on a dataset that we will soon release publicly, and online A/B testing results showing an uplift of 5.5% compared to the baseline production bidder.

Cost-sensitive Learning for Utility Optimization in Online Advertising Auctions

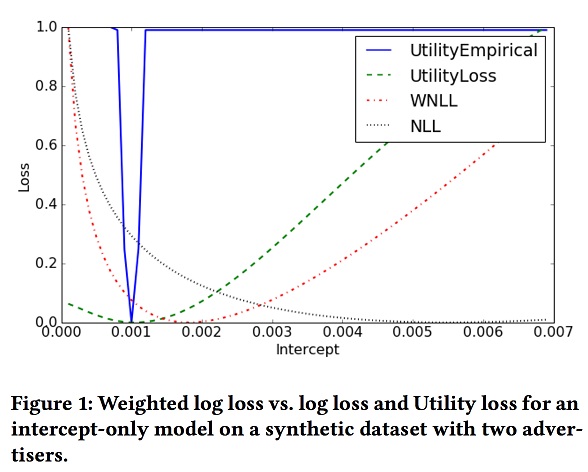

One of the most challenging problems in computational advertising is the prediction of click-through and conversion rates for bidding in online advertising auctions. An unaddressed problem is the existence of highly non-uniform costs for wrong predictions. While for model evaluation these costs have been taken into account through recently proposed business-aware offline metrics – such as the Utility metric, which measures the impact on advertiser profit in second-price auctions – this is not the case when training the models. In our paper, to bridge this gap, we formally analyze the relationship between optimizing the Utility metric and the log loss, which is considered the state-of-the-art approach in conversion modelling.
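As a rough illustration of what a profit-oriented offline metric measures, the sketch below replays logged auctions under a second-price rule. It assumes that the bid is the predicted probability times the conversion value and that a competing bid is available for each auction; the record layout and the function name are hypothetical and may differ from the exact definition used in the paper.

```python
def empirical_utility(records):
    """Sum advertiser profit over replayed second-price auctions.

    Each record is a tuple (predicted_prob, value, competing_bid, converted).
    Assumptions: we bid predicted_prob * value, win when our bid exceeds
    the competing bid, and then pay the competing bid (second price).
    """
    total = 0.0
    for predicted_prob, value, competing_bid, converted in records:
        bid = predicted_prob * value
        if bid > competing_bid:
            # Profit is the realized value minus the price paid.
            total += converted * value - competing_bid
    return total


auctions = [
    (0.10, 10.0, 0.5, 1),  # won, converted: profit 10.0 - 0.5
    (0.01, 10.0, 0.5, 0),  # bid too low, auction lost: no profit, no cost
]
utility = empirical_utility(auctions)
```

Under this reading, an over-prediction costs money on auctions that do not convert, while an under-prediction forfeits profitable auctions, which is why the costs of errors are non-uniform.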

We show that when the competing bid is equal or very close to our bid and the conversion probabilities are small (p ≪ 1), the derivatives of the Utility loss and the log loss are approximately equal, up to a factor v. This motivates the idea of weighting the log loss with the business value of the predicted outcome (e.g., the campaign-level estimate of the Cost-Per-Acquisition, CPA).
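The weighting idea can be sketched in a few lines. This is a minimal illustration, assuming one weight per example (for instance a campaign-level CPA estimate) and averaging over examples; the function name, the clipping constant and the normalization are assumptions, not the paper's exact formulation.

```python
import math


def weighted_log_loss(labels, probs, weights, eps=1e-12):
    """Per-example weighted negative log-likelihood, averaged over examples.

    With all weights equal to 1 this reduces to the usual log loss.
    """
    total = 0.0
    for y, p, w in zip(labels, probs, weights):
        # Clip probabilities to avoid log(0).
        p = min(max(p, eps), 1.0 - eps)
        total += -w * (y * math.log(p) + (1 - y) * math.log(1.0 - p))
    return total / len(labels)


labels = [1, 0]
probs = [0.5, 0.5]
unweighted = weighted_log_loss(labels, probs, [1.0, 1.0])
weighted = weighted_log_loss(labels, probs, [2.0, 2.0])
```

Examples from high-value campaigns then contribute proportionally more to the gradient, so the model spends its capacity where prediction errors are most expensive.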

As shown in the accompanying figure, the log loss weighted by CPA (WNLL) has a minimum much closer to that of the empirical Utility loss than the unweighted log loss (NLL) does.

In the second part of the paper we give details on how to optimize the weighted log loss in practice and show that the newly proposed method can lead to significant gains in offline and online performance.

If you find the above work interesting and are attending KDD 2017, make sure to stop by the live presentations on August 14 or talk to us at the Criteo AI Lab booth. We look forward to seeing you in Halifax.